The rapid growth of artificial intelligence has created powerful tools for generating images and videos that look real but are fabricated. These so-called AI deepfakes are increasingly being used during conflicts, spreading misinformation and confusion online. Now, the oversight body of Meta Platforms has urged the tech giant to rethink how it handles AI-generated content on its platforms.

Rising Concern Over AI War Propaganda

The call for change comes as conflicts around the world, including tensions involving Iran, have triggered a flood of viral war footage online. Many of these videos and images appear authentic but later turn out to have been created using artificial intelligence. Such AI-generated clips can spread rapidly across social media platforms, potentially misleading millions of viewers before the clips are fact-checked.

Experts warn that such content can shape public opinion, fuel panic, and even influence political decisions during wartime. In the digital age, misinformation can travel faster than the truth, making it difficult for people to distinguish real footage from manipulated media.

Oversight Board Criticizes Current Policies

The independent Meta Oversight Board, sometimes called the “Supreme Court of Facebook,” said Meta’s current policies are not strong enough to deal with the growing threat of AI-generated misinformation.

According to the board, the company’s systems for detecting and labeling deepfake videos rely too heavily on voluntary disclosures and limited moderation. As a result, misleading AI-generated videos have been able to circulate widely on platforms such as Facebook, Instagram, and Threads before being flagged or removed.

One example cited by the board involved a fake AI video showing destruction in the Israeli city of Haifa during a conflict. Despite multiple user reports, the video remained online and gathered significant attention before it was recognized as fabricated.

The Risk During Wars and Crises

The board emphasized that deepfakes are particularly dangerous during wars, elections, and crises. When tensions are high, people are more likely to believe dramatic footage or shocking claims.

AI-generated content designed to deceive can manipulate emotions, drive engagement on social media, and spread propaganda from state or non-state actors. As AI-generated media grows in both quality and volume, its impact on society could become even more profound.

Recommendations for Meta

The oversight board suggested several changes to Meta’s approach, including:

- Creating clearer rules for AI-generated content
- Expanding labels to clearly identify synthetic media
- Improving detection tools for deepfakes
- Increasing transparency about how the company moderates AI content

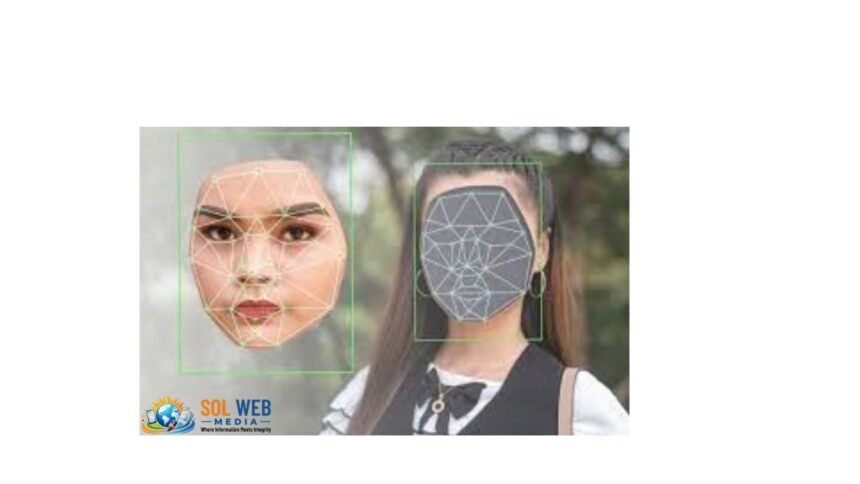

The board also recommended wider adoption of technologies such as content credentials, which allow users to verify whether a piece of media was created or altered using AI.

A Global Challenge for Social Media

The debate highlights a broader challenge for social media companies. As AI technology becomes more powerful, it is becoming harder to tell real digital content from fake.

For platforms like Facebook and Instagram, the challenge is to balance free expression, technological innovation, and the need to prevent misinformation that could destabilize societies during sensitive moments such as wars or elections.

With AI tools becoming widely accessible, experts believe that stronger policies and better detection systems will be necessary to prevent deepfakes from becoming a major weapon in the information wars of the future.